On August 1, 2024, the AI Act (Regulation (EU) 2024/1689) entered into force in the European Union, establishing a common framework for the development, marketing, deployment, and use of artificial intelligence systems.

In addition to applying this regulation, the Spanish government has promoted the Draft Bill on the Proper Use and Governance of Artificial Intelligence, which establishes a national framework for oversight, the distribution of responsibilities, and a penalty system.

In this article, we explain what the artificial intelligence law in Spain is, how it builds on European artificial intelligence legislation, who it affects, how it classifies risk, what technological and organizational requirements should be implemented, and what key dates companies should already be monitoring.

- What is the Artificial Intelligence Law in Spain?

- Who is affected by Spain’s AI Act

- How the Act classifies Artificial Intelligence systems

- Technological and organizational requirements for high-risk systems that suppliers must meet

- Timeline for the Implementation of the AI Act in Spain

- Strategic impact of the Law on Technology Companies

- Frequently Asked Questions

What is the Artificial Intelligence Law in Spain?

The Artificial Intelligence Act was adopted by the European Union in 2024 and marks a milestone as the first comprehensive AI law of its kind in the world. As an EU regulation, it applies directly in Spain, and Spain has complemented it with a national draft bill that aims to establish standards and promote trustworthy AI models.

When we talk about artificial intelligence law in Spain, we are referring to two regulatory frameworks:

- The first and primary one is the European AI Act, passed by the European Parliament and the Council of the European Union, which entered into force in August 2024.

- The second is Spain’s framework for governance and oversight, through which the Spanish government establishes competent authorities and control mechanisms, adapting its institutional structure to the European framework.

The European AI Act: The framework regulation applicable in Spain

The AI Act is the first large-scale, comprehensive regulation of artificial intelligence and takes a risk-based approach: the greater the potential for harm to safety, health, or fundamental rights, the stricter the obligations.

The European Commission proposed the legislation, the European Parliament adopted it in March 2024, and the Council of the European Union gave its final approval in May 2024. The result is Regulation (EU) 2024/1689, published in the Official Journal of the European Union, whose provisions become applicable in phases.

Considerations:

- For legal purposes, this is the foundational regulation governing artificial intelligence in Spain.

- Across EU member states, the regulation applies to companies that develop, integrate, deploy, distribute, or use AI systems, even if they do not build the model from scratch. The AI Act therefore assigns obligations to various operators, including providers and deployers.

What Spain adds to the European framework

Spain contributes national governance, competent authorities, and institutional adaptations. Key points to note:

- The AESIA (Spanish Agency for the Supervision of Artificial Intelligence) has been established as a public body, and its statute was approved by royal decree.

- In March 2025, the Council of Ministers approved the Draft Bill on the Proper Use and Governance of Artificial Intelligence.

Regulation in Spain is also linked to the Charter of Digital Rights, the supervisory role of the AESIA, and the existing compliance ecosystem, particularly the GDPR (General Data Protection Regulation).

Who is affected by Spain’s AI Act

The AI Act applies to providers, importers, distributors, and product manufacturers, as well as companies that deploy or use AI systems in internal processes or in interactions with customers. This is especially relevant for high-risk uses and for uses subject to transparency obligations.

This includes, for example, organizations that use AI to screen resumes, assess risks, verify biometric identity, or support decision-making in education, healthcare, employment, credit, justice, or access to essential services.

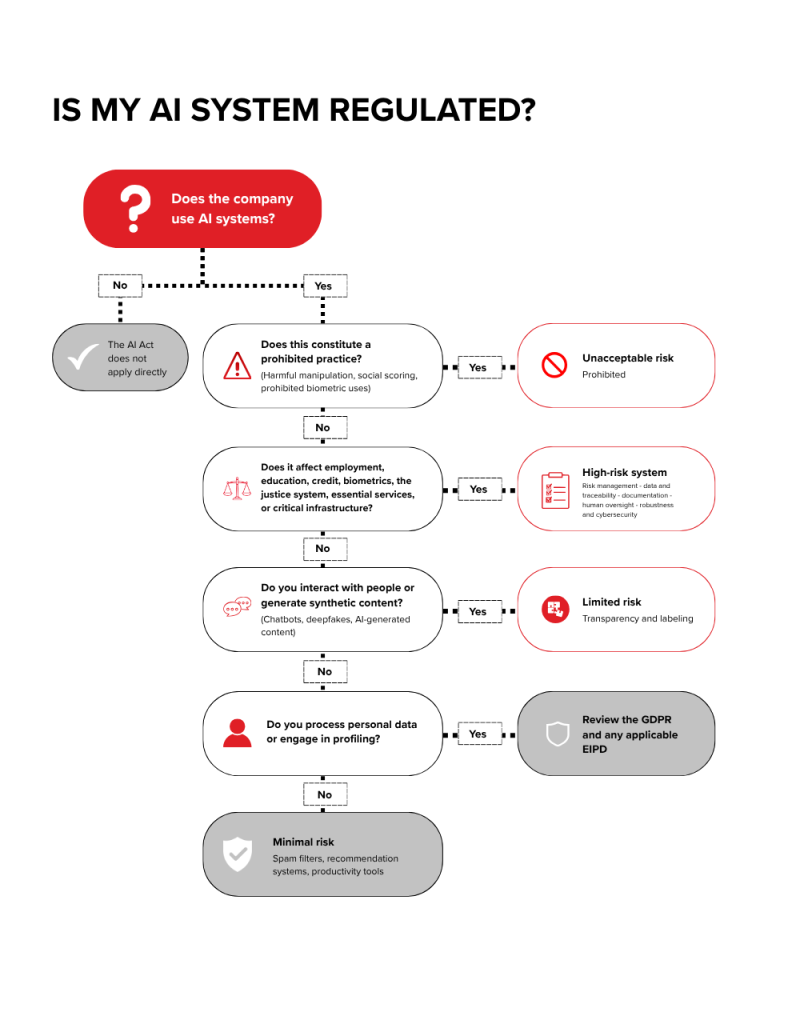

How the Act classifies Artificial Intelligence systems

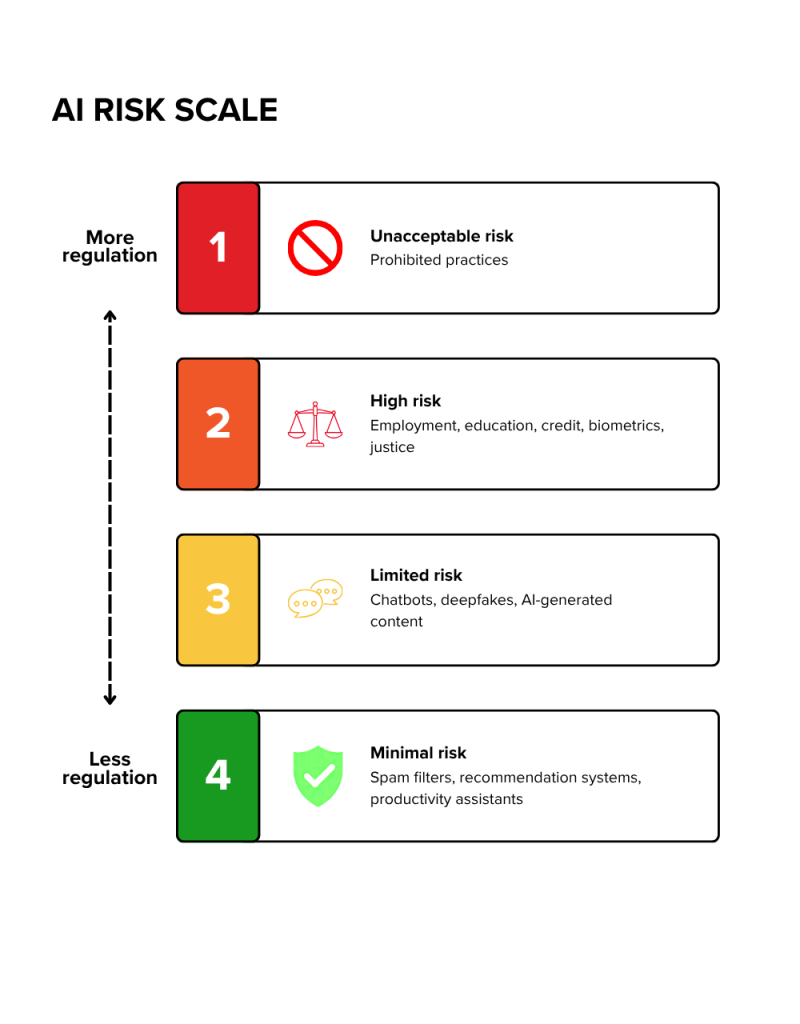

The AI Act takes a tiered approach: it classifies AI systems by their level of risk and sets restrictions proportionate to each tier:

- Unacceptable risk

- High risk

- Limited risk

- Minimal risk

This classification forms the technical and legal basis of the regulation. This explains why some practices are prohibited, others must undergo conformity assessments, and others need only comply with transparency obligations.
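To make the tiers concrete for an internal AI inventory, here is a minimal Python sketch of a first-pass triage. The area labels, the `UseCase` fields, and the `triage` function are hypothetical simplifications; an actual classification requires analysing the prohibited practices, the high-risk criteria in the regulation and its annexes, and their exceptions. The checks run from the most restrictive tier down, so the first match wins.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"  # prohibited practice
    HIGH = "high"                  # strict requirements and conformity assessment
    LIMITED = "limited"            # transparency obligations
    MINIMAL = "minimal"            # no specific AI Act obligations


# Hypothetical shorthand labels for the high-risk areas discussed in this article.
HIGH_RISK_AREAS = {
    "employment", "education", "essential_services",
    "critical_infrastructure", "biometrics", "justice",
}


@dataclass
class UseCase:
    name: str
    prohibited_practice: bool = False        # e.g. social scoring
    area: Optional[str] = None               # a HIGH_RISK_AREAS label, if any
    interacts_with_people: bool = False      # e.g. a customer-facing chatbot
    generates_synthetic_content: bool = False


def triage(use_case: UseCase) -> RiskTier:
    """First-pass triage that checks the most restrictive tier first."""
    if use_case.prohibited_practice:
        return RiskTier.UNACCEPTABLE
    if use_case.area in HIGH_RISK_AREAS:
        return RiskTier.HIGH
    if use_case.interacts_with_people or use_case.generates_synthetic_content:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


print(triage(UseCase("CV screening", area="employment")).value)             # high
print(triage(UseCase("Support chatbot", interacts_with_people=True)).value)  # limited
print(triage(UseCase("Spam filter")).value)                                  # minimal
```

In practice a high-risk system can also carry transparency obligations, so a fuller version would return a set of applicable obligations rather than a single tier.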

Unacceptable risk

Practices involving unacceptable risk are prohibited. In February 2025, the European Commission published guidelines on prohibited practices, including examples related to harmful manipulation, exploitation of vulnerabilities, social scoring, and certain uses of real-time remote biometric identification by law enforcement agencies.

High-risk systems

This category includes AI systems that are safety components of regulated products, as well as systems used in sensitive areas such as:

- Employment (e.g., tools for screening resumes or evaluating candidates)

- Education (such as automated grading or admissions systems)

- Access to essential private and public services (e.g., scoring systems for granting loans or prioritizing aid)

- Critical infrastructure (such as energy or transportation management systems)

- Biometrics (e.g., remote biometric identification such as facial recognition, or emotion recognition)

- Administration of justice (such as tools to analyze evidence or assess procedural risks)

Limited Risk and Transparency Requirements

This category covers AI systems that, while not high-risk, are subject to certain transparency requirements. Examples:

- When a person interacts with an AI system, such as a chatbot, and might not realize it.

- When synthetic content such as text, images, audio, or video is generated that could be misleading if not properly identified.

In these cases, the regulation does not impose the same level of requirements as for high-risk systems, but it does require clear disclosure regarding the use of artificial intelligence.

The goal is for users, customers, or citizens to know when they are encountering content generated or manipulated by AI so they can act with full knowledge.

Minimal Risk

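This tier covers most common AI applications, such as spam filters or AI-enabled video games, which are not subject to specific obligations under the AI Act.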

However, the fact that a solution is not “high-risk” does not eliminate other applicable obligations, such as those arising from the GDPR, sector-specific regulations, or contractual and information security obligations.

Technological and organizational requirements for high-risk systems that suppliers must meet

The AI Act's obligations vary by role and risk level, but any organization with some exposure to AI needs a minimum foundation of governance, traceability, security, and oversight to stay compliant, especially for systems classified as "high-risk".

1. Risk Management Throughout the Lifecycle

Article 9 requires a risk management system that operates continuously throughout the lifecycle of a high-risk system. Foreseeable risks must be identified before the system is put into service, validated through testing, monitored during operation, and reviewed after incidents, significant changes, or expansions in use.

We recommend implementing a governance framework that covers at least:

- Inventory of use cases

- Risk classification

- Case-specific controls

- Responsible parties

- Escalation criteria

- Reassessment procedures when the model, data, or context changes
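One way to make the framework above operational is to keep each use case as a structured inventory record and to detect automatically when the model, data, or deployment context has changed since the last assessment. The sketch below uses hypothetical field names and version strings; it is one possible layout, not a format prescribed by the AI Act.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class InventoryEntry:
    use_case: str
    risk_tier: str            # result of the classification step, e.g. "high"
    owner: str                # responsible party
    escalation_contact: str   # who is alerted when a control fails
    controls: List[str] = field(default_factory=list)  # case-specific controls
    model_version: str = ""
    dataset_version: str = ""
    deployment_context: str = ""
    last_assessed: Optional[date] = None


def reassessment_reasons(entry: InventoryEntry, model_version: str,
                         dataset_version: str, deployment_context: str) -> List[str]:
    """Compare the live system with the assessed baseline and list what changed."""
    reasons = []
    if entry.last_assessed is None:
        reasons.append("never assessed")
    if model_version != entry.model_version:
        reasons.append(f"model changed: {entry.model_version} -> {model_version}")
    if dataset_version != entry.dataset_version:
        reasons.append(f"data changed: {entry.dataset_version} -> {dataset_version}")
    if deployment_context != entry.deployment_context:
        reasons.append(f"context changed: {entry.deployment_context} -> {deployment_context}")
    return reasons


cv_screening = InventoryEntry(
    use_case="CV screening",
    risk_tier="high",
    owner="HR systems lead",
    escalation_contact="AI governance committee",
    controls=["human review of rejections", "quarterly bias audit"],
    model_version="v1",
    dataset_version="2024-10",
    deployment_context="internal hiring",
    last_assessed=date(2025, 1, 15),
)
print(reassessment_reasons(cv_screening, "v2", "2024-10", "internal hiring"))
# ['model changed: v1 -> v2']
```

An empty list means the baseline assessment still matches the running system; any entry in it is a trigger for the reassessment procedure.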

2. Data Governance, Quality, and Traceability

Data is the source of much of the bias, error, and discriminatory outcomes in AI systems. Article 10 requires high-risk systems to follow appropriate data governance practices, including documentation and quality control of the training, validation, and testing datasets.

In Spain, as elsewhere in the EU, when personal data is involved the company must also align this work with the GDPR, the principle of data protection by design, and, where applicable, a data protection impact assessment (DPIA).

The AEPD (Spanish Data Protection Agency) notes that a DPIA is mandatory whenever the processing is likely to pose a high risk to the rights and freedoms of individuals.
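Article 10 does not prescribe specific metrics, but a simple automated report can give data governance reviews something concrete to sign off on. This minimal sketch assumes tabular data held as a list of dictionaries; it measures the share of missing values per required field and flags groups whose share of rows falls below a hypothetical 10% threshold (`min_group_share`).

```python
from collections import Counter
from typing import Dict, Iterable, List


def dataset_report(rows: List[dict], required_fields: Iterable[str],
                   group_key: str, min_group_share: float = 0.10) -> Dict[str, object]:
    """Summarise completeness and group representation of a dataset."""
    n = len(rows)
    if n == 0:
        raise ValueError("empty dataset")
    # Share of rows where each required field is missing or empty.
    missing_rate = {
        f: sum(1 for r in rows if r.get(f) in (None, "")) / n
        for f in required_fields
    }
    # Share of rows per group (e.g. a region or age band), to spot imbalance.
    counts = Counter(r.get(group_key, "unknown") for r in rows)
    group_share = {g: c / n for g, c in counts.items()}
    underrepresented = sorted(g for g, s in group_share.items() if s < min_group_share)
    return {
        "rows": n,
        "missing_rate": missing_rate,
        "group_share": group_share,
        "underrepresented": underrepresented,
    }


rows = [{"age": 30, "region": "north"}] * 19 + [{"age": None, "region": "south"}]
report = dataset_report(rows, required_fields=["age"], group_key="region")
print(report["missing_rate"], report["underrepresented"])
# {'age': 0.05} ['south']
```

Representation alone does not prove that a dataset is free of bias; it is one input among others for the quality controls described above.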

3. Transparency, explainability, and labeling of AI-generated content

Transparency does not necessarily mean making all source code public; it does mean providing useful and proportionate information about how a system works, its limitations, and the artificial nature of certain content or interactions. These requirements are set out in Article 50 and in the documentation obligations for general-purpose AI models and high-risk systems.

To this end, the following can be considered:

- Interfaces that notify users when they are interacting with AI.

- Metadata or labels for synthetic content.

- Model cards.

- Documentation for integrators.

- Internal guidelines on what can be automated and what must be explained to the affected customer, employee, or third party.
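As an illustration of the first two items, the sketch below attaches a visible notice and machine-readable metadata to AI-generated text. The disclosure wording, the metadata keys, and the `support-bot-v3` identifier are assumptions. Standards such as C2PA content credentials exist for embedding provenance in media files; this sketch does not implement them.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical wording; adapt it to the channel and language of your users.
DISCLOSURE = "This content was generated with artificial intelligence."


def label_generated_text(text: str, model_id: str) -> dict:
    """Attach a visible AI notice and machine-readable provenance metadata."""
    return {
        "content": f"{text}\n\n[{DISCLOSURE}]",
        "metadata": {
            "ai_generated": True,
            "generator": model_id,  # hypothetical internal model identifier
            "created_at": datetime.now(timezone.utc).isoformat(),
            # Hash of the raw output, so later edits to the text can be detected.
            "sha256": hashlib.sha256(text.encode("utf-8")).hexdigest(),
        },
    }


labeled = label_generated_text("Your order has shipped.", "support-bot-v3")
print(labeled["content"])
print(json.dumps(labeled["metadata"], indent=2))
```

Keeping the notice and the metadata together in one record makes it easier to show, later, which outputs were labeled and by which system.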

4. Human oversight, robustness, and cybersecurity

The AI Act requires high-risk systems to be designed for effective human oversight, as outlined in Article 14. Processes should enable a competent person to detect anomalies, decide when to intervene, and prevent the system from automatically causing or exacerbating harm.

High-risk systems must also achieve adequate levels of accuracy, robustness, and cybersecurity (Article 15) and be resilient to errors and to attempts at manipulation.

Here, it is advisable to implement controls such as:

- Human review of sensitive decisions

- Stress testing

- Usage restrictions

- Permission management

- Data pipeline security

- Integration hardening

- Prompt control and protection against manipulation

- Monitoring for information leakage and model degradation
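Human review of sensitive decisions is often implemented as a routing rule placed in front of the model's output. This minimal sketch assumes a hypothetical `Decision` record with a confidence score in [0, 1]; it sends adverse outcomes in sensitive processes, low-confidence results, and out-of-range or NaN scores to a person instead of applying them automatically. The 0.9 threshold is an illustrative assumption, not a regulatory value.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    subject_id: str
    outcome: str        # e.g. "approve" or "reject"
    confidence: float   # model score, expected in [0, 1]
    sensitive: bool     # e.g. affects employment, credit, or access to services


def route(decision: Decision, min_confidence: float = 0.9) -> str:
    """Return "human_review" or "automatic" for a model decision."""
    if not 0.0 <= decision.confidence <= 1.0:
        # Out-of-range (or NaN) scores suggest a malfunction: never automate them.
        return "human_review"
    if decision.sensitive and decision.outcome == "reject":
        # Adverse outcomes in sensitive processes always get a human check.
        return "human_review"
    if decision.confidence < min_confidence:
        return "human_review"
    return "automatic"


print(route(Decision("A-1", "approve", 0.97, sensitive=False)))  # automatic
print(route(Decision("A-2", "reject", 0.99, sensitive=True)))    # human_review
print(route(Decision("A-3", "approve", 0.62, sensitive=False)))  # human_review
```

Logging each routing decision, and how reviewers resolved it, also produces evidence that oversight actually takes place.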

5. Documentation, Recording, and Monitoring

Documentation is one of the most important aspects. Compliance requires supporting evidence such as technical documentation, record-keeping, information for deployers, and, in certain cases, post-market monitoring and serious incident reporting.

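Without traceability, compliance is very difficult to demonstrate. The AI Act sets out technical documentation and record-keeping requirements for high-risk systems (Articles 11 and 12) and documentation obligations for providers of general-purpose AI models (Article 53).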

For this reason, it is important to implement a documentation system as soon as possible that records the system's purpose, the datasets used, known limitations, test results, error metrics, incidents, version changes, who approved each change, and usage controls.
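For the record-keeping part, one common technique is a hash-chained, append-only log: each entry stores the SHA-256 hash of the previous one, so editing, reordering, or deleting an earlier entry breaks verification. This is a minimal in-memory sketch with hypothetical event names; a production system would also need durable storage, access control, and retention rules.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only log where each entry includes the hash of the previous one."""

    def __init__(self) -> None:
        self.entries: List[dict] = []

    def append(self, event: str, **details) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "event": event,
            "details": details,  # must be JSON-serializable
            "prev_hash": prev_hash,
        }
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        entry = {**body, "hash": hashlib.sha256(payload).hexdigest()}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain. Note: dropping only the newest entry is not detected."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("model_deployed", version="v2", approved_by="risk committee")
log.append("incident", summary="unexpected rejection rate in one region")
print(log.verify())                          # True
log.entries[0]["details"]["version"] = "v3"  # simulate tampering
print(log.verify())                          # False
```

Anchoring the latest hash somewhere outside the log (for example, in a periodic report) closes the gap noted in `verify`.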

Timeline for the Implementation of the AI Act in Spain

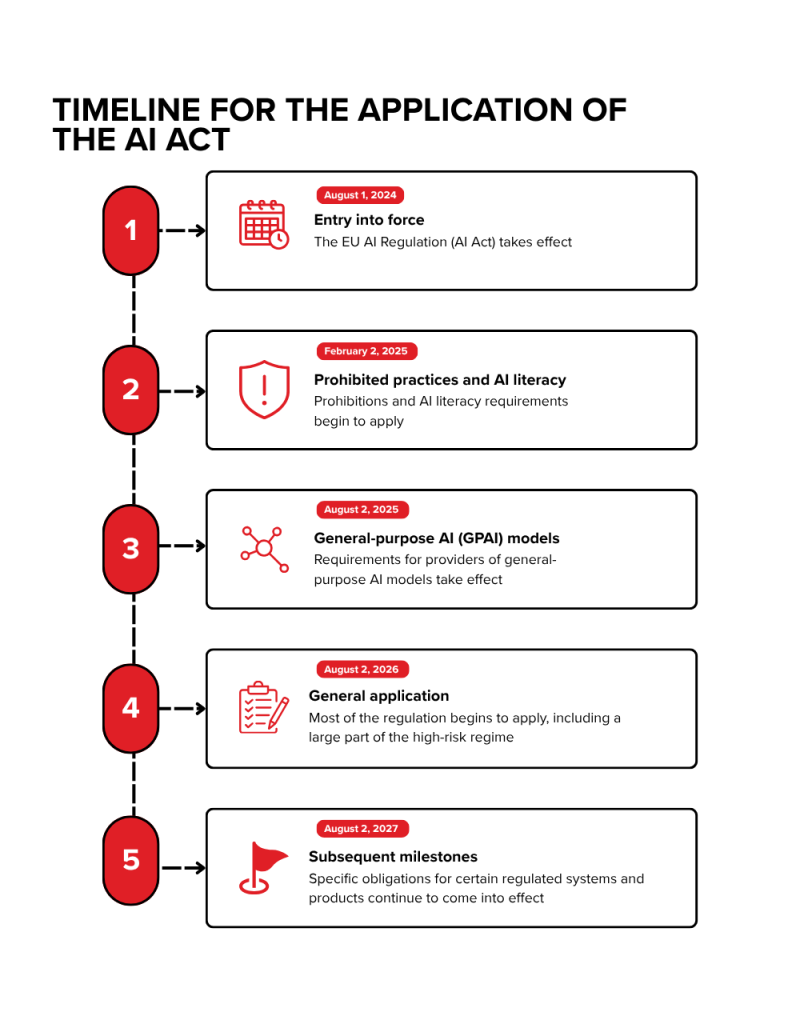

The timeline is not linear: the AI Act entered into force on August 1, 2024, but its implementation is being rolled out in phases. The European Commission notes that it will be fully applicable on August 2, 2026, with significant exceptions before and after that date.

Which obligations are already in effect

- As of February 2, 2025: the prohibitions on unacceptable-risk practices and the obligation for providers and deployers to ensure a sufficient level of AI literacy among their staff.

- Starting August 2, 2025: obligations for providers of general-purpose AI (GPAI) models. The European Commission has explained this in its official FAQs and in its guidelines for providers: these include technical documentation requirements, information for downstream actors, and additional requirements for models posing systemic risk.

What upcoming milestones should companies keep an eye on?

From August 2, 2026, most of the regulation becomes applicable, including the requirements and obligations for the high-risk systems listed in Annex III, with oversight and enforcement by European and national authorities. The obligations for high-risk AI systems embedded in regulated products (Annex I) apply from August 2, 2027.
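To track which milestones already apply, for example in a compliance dashboard, the key dates can be kept in code. The dates below follow the phased timeline of Regulation (EU) 2024/1689; the labels are abbreviated and the helper names are hypothetical.

```python
from datetime import date
from typing import List, Optional, Tuple

# Phased application dates, kept in chronological order (labels abbreviated).
MILESTONES: List[Tuple[date, str]] = [
    (date(2024, 8, 1), "Entry into force"),
    (date(2025, 2, 2), "Prohibited practices and AI literacy"),
    (date(2025, 8, 2), "Obligations for general-purpose AI model providers"),
    (date(2026, 8, 2), "General application, including Annex III high-risk systems"),
    (date(2027, 8, 2), "High-risk AI in regulated products (Annex I)"),
]


def milestones_in_effect(on: date) -> List[str]:
    """Labels of every milestone whose start date is on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date) -> Optional[Tuple[date, str]]:
    """The first milestone strictly after `on`, or None if all have passed."""
    upcoming = [m for m in MILESTONES if m[0] > on]
    return upcoming[0] if upcoming else None


today = date(2025, 9, 1)
for label in milestones_in_effect(today):
    print("In effect:", label)
print("Next:", next_milestone(today))
```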

Strategic impact of the Law on Technology Companies

In the medium term, as artificial intelligence is regulated in Spain and the EU, companies that best document their models, control their data chain, and demonstrate governance, compliance assessment, and human oversight will have a competitive advantage based on trust and regulatory compliance.

How to Adapt to the AI Act in Spain

To properly adapt to the law, three things must be considered:

- First, map all uses of AI: internal, commercial, experimental, and third-party.

- Second, classify them by risk and regulatory framework: the AI Act, the GDPR, sector-specific regulations, and the Digital Services Act (DSA), if applicable.

- Third, close technical and organizational gaps with realistic priorities.

Thus, a mature adaptation plan should include at least:

- Inventory of systems and vendors

- AI usage policy

- Training and AI literacy for teams

- Contract review with vendors

- Data governance

- Traceability controls

- Human review for sensitive processes

- Labeling of generated content

- Impact assessments where applicable

- An evidence system for auditing or oversight
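The three steps described earlier can be combined into a simple gap analysis: for each inventoried use case, compare the controls already in place with those expected for its risk tier, and list the highest-risk gaps first. The mapping of controls to tiers below is a hypothetical simplification of the plan above, not a list of legal requirements.

```python
from typing import Dict, List, Set, Tuple

# Controls drawn from the adaptation plan; the split by tier is hypothetical.
BASELINE = ["system and vendor inventory", "AI usage policy", "AI literacy training",
            "vendor contract review", "data governance", "traceability controls"]
EXTRA_BY_TIER = {
    "high": ["human review", "impact assessment", "audit evidence"],
    "limited": ["content labeling"],
    "minimal": [],
}
PRIORITY = {"high": 0, "limited": 1, "minimal": 2}  # lower number = fix first


def gap_report(inventory: Dict[str, Tuple[str, Set[str]]]) -> List[Tuple[str, List[str]]]:
    """inventory maps use case -> (risk tier, implemented controls).

    Returns (use case, missing controls) pairs, highest-risk tiers first.
    """
    gaps = []
    for use_case, (tier, implemented) in inventory.items():
        required = BASELINE + EXTRA_BY_TIER.get(tier, [])
        missing = [c for c in required if c not in implemented]
        if missing:
            gaps.append((PRIORITY.get(tier, 3), use_case, missing))
    gaps.sort(key=lambda g: (g[0], g[1]))
    return [(use_case, missing) for _, use_case, missing in gaps]


inventory = {
    "support chatbot": ("limited", set(BASELINE)),
    "CV screening": ("high", set(BASELINE) | {"human review"}),
}
for use_case, missing in gap_report(inventory):
    print(use_case, "->", missing)
```

Sorting by tier is only one way to set "realistic priorities"; effort, deadlines, and vendor dependencies usually matter as well.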

Frequently Asked Questions

Is there already an artificial intelligence law in Spain?

Yes. The main regulation is the European AI Act, which applies directly in Spain. In addition, Spain has introduced the Draft Bill on the Proper Use and Governance of Artificial Intelligence to establish national oversight and the division of responsibilities.

Does the AI Act in Spain only apply to companies that develop AI?

No. It may also apply to companies that deploy or use AI systems, especially if they fall into high-risk categories or are required to comply with transparency or AI literacy obligations.

What happens if my company uses ChatGPT or another third-party generative model?

It depends on the use case and your company's role. Even if you don't develop the model yourself, you may have obligations as a deployer, especially if you integrate it into sensitive processes, generate content for third parties, or process personal data. Separately, since August 2, 2025, providers of general-purpose AI (GPAI) models have been subject to their own specific obligations.

Which systems are considered high-risk?

These include, among others, certain systems used in employment, education, biometrics, essential services, critical infrastructure, immigration, justice, and law enforcement, as well as certain regulated products that incorporate AI components.

What obligations should a company already be addressing today?

At a minimum, two: ensuring that it does not engage in prohibited practices and ensuring that the people who use or operate AI on its behalf have a sufficient level of AI literacy.

How does the AI Act relate to the GDPR?

They do not replace one another. If your AI system processes personal data, the GDPR remains fully in effect. In many cases, you will need to assess the legal basis, data minimization, automated decision-making, profiling, and, if there is a high risk to rights and freedoms, conduct a data protection impact assessment (DPIA).

The Artificial Intelligence Act is establishing a regulatory framework that allows companies to operate under ethical criteria, ensuring transparency in how they adopt, deploy, and monitor AI. At the same time, it focuses on the protection of fundamental rights and sensitive data, especially in cases where the use of artificial intelligence may have a greater impact on individuals.

Are you evaluating your systems and need to define a robust and secure architecture?

Contact our team, and we’ll help you drive your digital strategy with a technology foundation that’s ready for the future.

Official sources

- Regulation (EU) 2024/1689 — AI Act on EUR-Lex

- AI Act — European Commission / Shaping Europe’s digital future

- Official FAQ for navigating the AI Act

- Council of the EU: Final approval of the AI Act

- European Parliament: Adopted text of the AI Act

- BOE: Regulation (EU) 2024/1689 in Spanish

- La Moncloa: Draft Bill on the Proper Use and Governance of AI

- AESIA — official website

- BOE: AESIA Statute

- AEPD — Innovation and Technology

- AEPD — processing operations involving AI

- AI literacy — official questions and answers

- GPAI models — official questions and answers